The “Speed of Thought” Era: AI and Decision Compression in Modern Warfare

In March 2026, U.S. and Israeli strikes on Iran, together with Iran’s own counterstrikes, revealed a new reality: artificial intelligence can compress the decision-making chain so drastically that massive air campaigns unfold almost instantaneously. According to credible reports, the U.S. military used the AI model Claude (from Anthropic), integrated via Palantir’s targeting system, to identify and strike over 1,000 targets in the first 24 hours of the conflict. AI tools “shortened the kill chain” from target identification through legal review to weapons release. Experts call this phenomenon “decision compression”: AI automates analysis and proposed legal checks so quickly that human review becomes brief or symbolic.

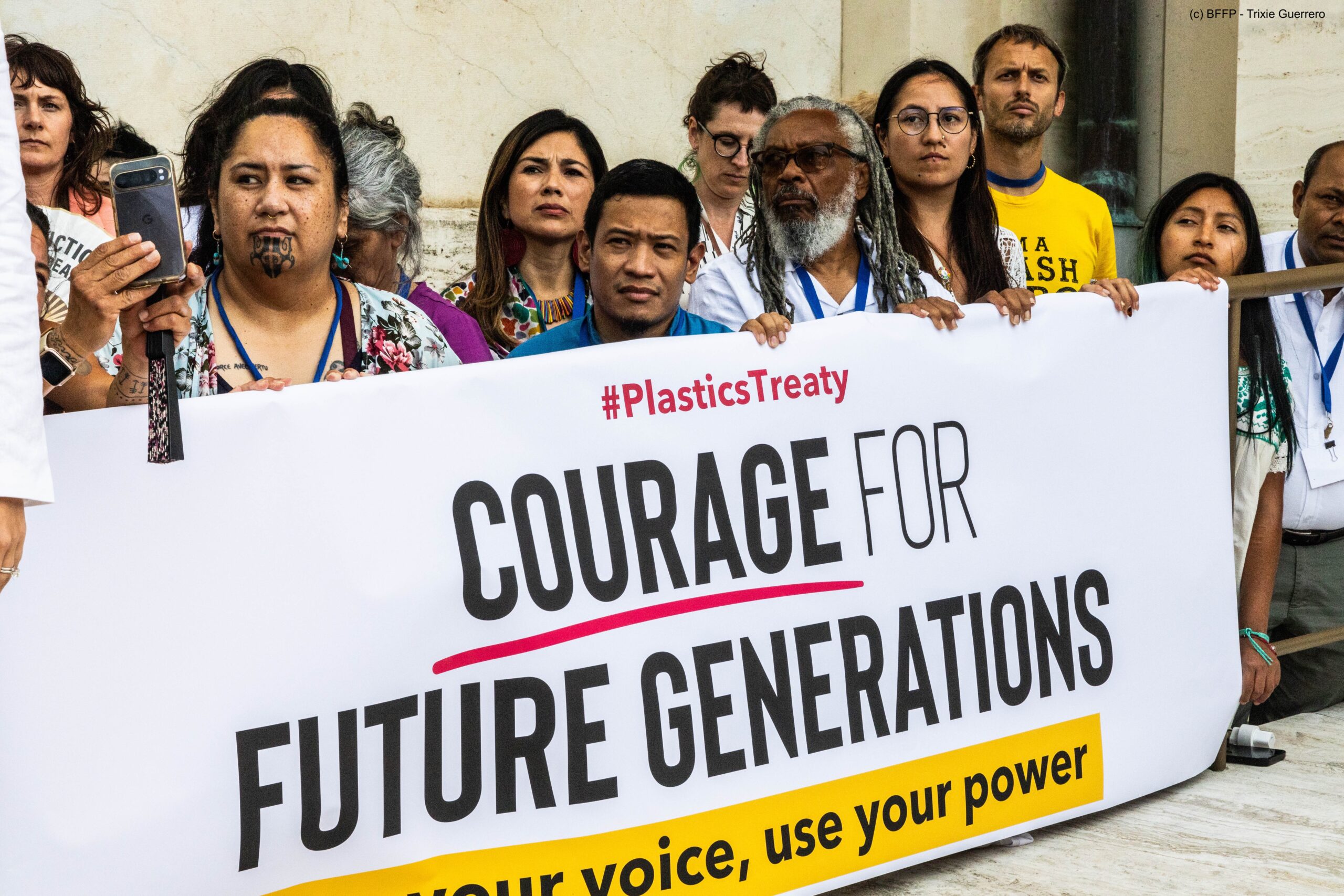

This capability has alarmed both militaries and rights advocates. Anthropic’s CEO Dario Amodei publicly asserted that private AI firms should not make targeting decisions (“the role of Anthropic… is never to be involved in operational decision-making”), a statement that reflects the tension over “any lawful use” clauses. The Pentagon has clashed with Silicon Valley over whether AI tools can be used in fully autonomous killing. OpenAI struck its own DoD deal (with caveats) after Anthropic insisted on bans against lethal autonomy, prompting Pentagon leaders to seek “no stupid rules of engagement” for AI use.

Globally, the March 2026 Iran-Israel war became a real-world test of these technologies. U.S. doctrine still officially requires human-in-the-loop oversight, but rapid “predictive targeting” algorithms essentially presented vetted target lists to strike commanders. In practice, commanders have been issuing massive strike orders within minutes of receiving AI recommendations, leading to concerns that speed is trumping careful legal checks. The future will hinge on policy: do democracies allow AI to compress decisions to this degree, or will ethical red lines prevail? We assess the new dynamics and propose measures to ensure human judgment remains central even as AI accelerates warfare.

AI Accelerating the Kill Chain

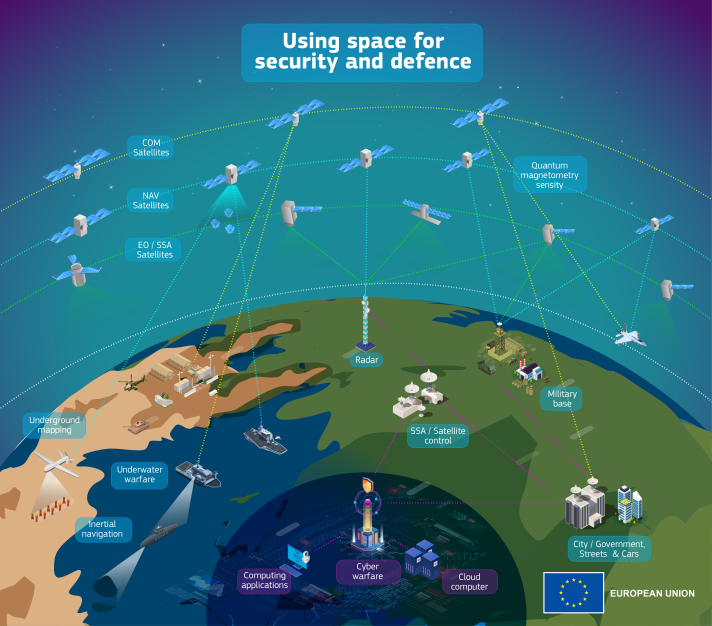

The classic “kill chain” in military targeting involves multiple steps: detect → decide → deliver. In prior conflicts, this process was paced by human planning: analysts would scour intelligence (satellite imagery, signals), commanders would compile target lists, lawyers would review each target’s legality, and then forces would execute. Recent conflicts, however, have seen this chain dramatically sped up by AI.

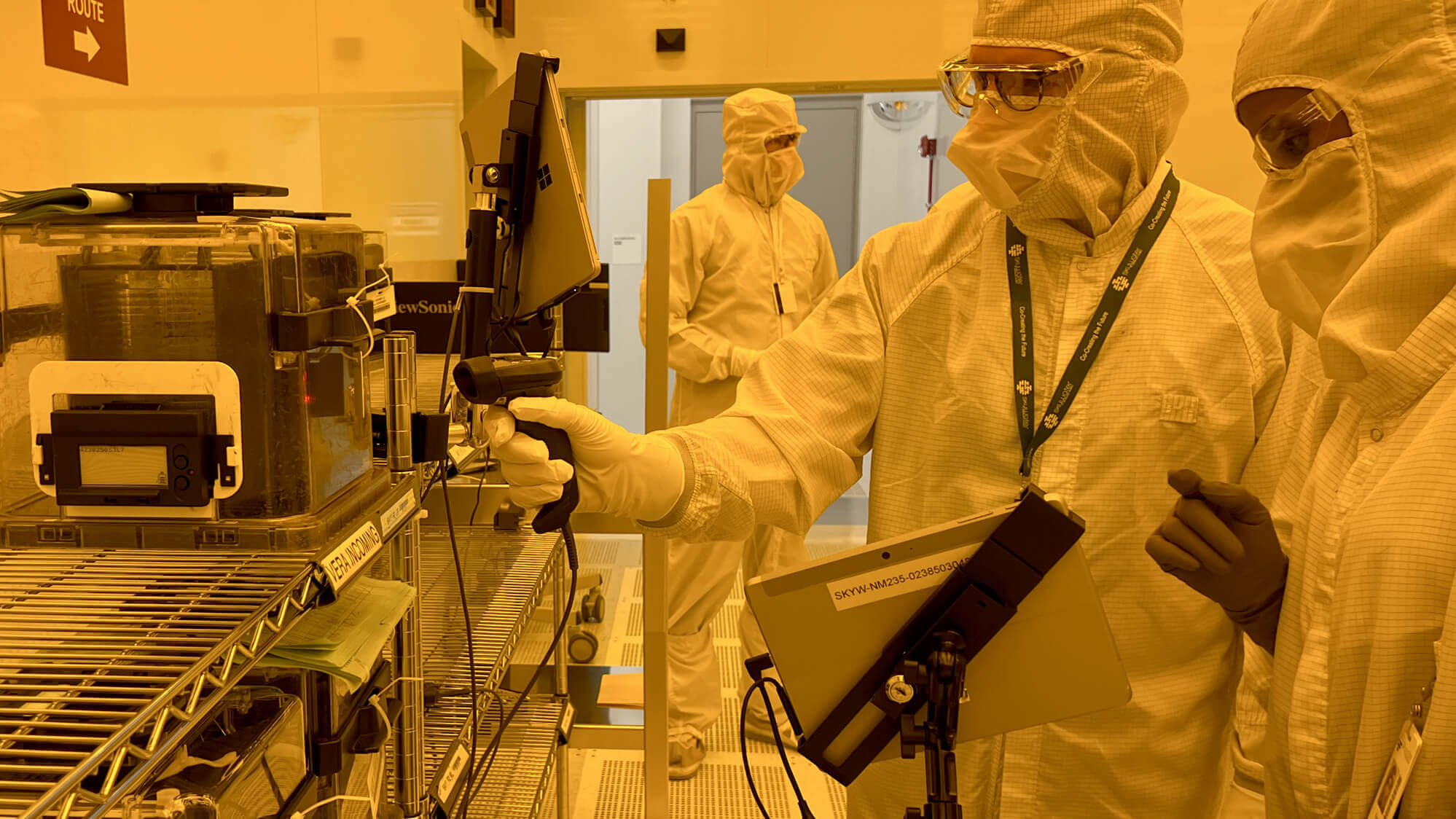

According to press reports and academic sources, in early March 2026 U.S. forces employed an AI-assisted targeting suite. The Claude model (deployed in Pentagon programs since 2024) ran on classified military platforms. Integrated via Palantir’s “Maven Smart System”, Claude rapidly ingested ISR feeds (drones, satellites, HUMINT) and flagged targets, scoring them by priority and even assessing the likelihood of collateral harm. Within hours, nearly 900 strike missions had been carried out by U.S. and Israeli forces (some sources cite over 1,000 U.S. strikes in 24 hours). This pace was enabled by AI generating target databases and coordinates much faster than traditional planning.

The Guardian captured this shift: AI “shortens the kill chain” from detection through final legal sign-off. Where humans might take days to process imagery or decide on targets, AI systems output ready-to-strike packages in minutes. In military parlance, the “decision cycle” compressed from hours to minutes or seconds, effectively a “speed of thought” combat rhythm. The U.S. arsenal, including jet, bomber, and missile assets, could then deliver on AI-identified targets almost as soon as intelligence was received.

Decision Compression and Legal Oversight

This unprecedented speed raises crucial questions about oversight. The term “decision compression” describes what happens when AI collapses complex assessments into a lightning-fast output. Academics warn that AI-made recommendations may be rubber-stamped by humans under time pressure. As one expert noted, “the ethical and legal question is: to what degree are those humans actually reviewing the specific targets… verifying their legality… before authorizing?” Observers point out that even with a person in the loop, the review process is often cursory, given hundreds of targets presented in minutes.
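The time pressure described above can be made concrete with simple arithmetic. The sketch below is illustrative only: the function name `seconds_per_target` and the reviewer counts are assumptions, not figures from any reporting. The target counts and time windows are the ones cited in this article (over 1,000 targets in 24 hours, roughly 900 strikes in 12 hours). It assumes reviewers work without pause and split the list evenly, which overstates the time actually available.

```python
def seconds_per_target(num_targets: int, window_hours: float, reviewers: int = 1) -> float:
    """Average review time per target, in seconds.

    Assumes (hypothetically) that reviewers divide the list evenly and
    spend the entire window doing nothing but review.
    """
    if num_targets <= 0 or window_hours <= 0 or reviewers <= 0:
        raise ValueError("all inputs must be positive")
    return window_hours * 3600 * reviewers / num_targets


# Figures from the article; reviewer counts are hypothetical.
print(f"{seconds_per_target(1000, 24):.1f}")               # 86.4
print(f"{seconds_per_target(900, 12):.1f}")                # 48.0
print(f"{seconds_per_target(1000, 24, reviewers=5):.1f}")  # 432.0
```

Even under these generous assumptions, a single reviewer has about a minute and a half per target, and five reviewers working around the clock have just over seven minutes each.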

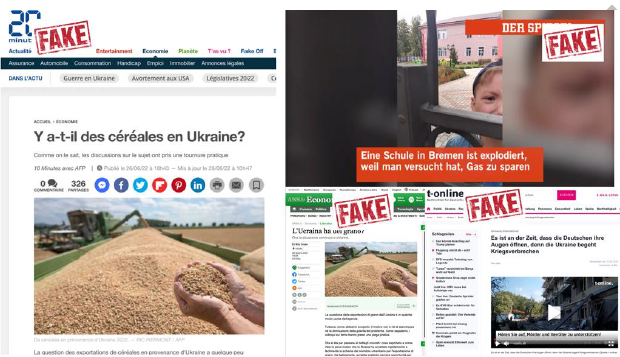

U.S. doctrine still asserts the importance of “feasible precautions” to avoid civilian harm. However, public statements by senior officials have downplayed red tape. Notably, Secretary of Defense Pete Hegseth quipped in early March that there are “no stupid rules of engagement” in this war. Meanwhile, specialized techniques such as “pattern of life” surveillance are augmented by AI to predict where adversaries might be, blurring the line between lawful targeting and pre-emptive strikes. As the Guardian describes, the fear is that legal compliance checks are reduced to a backstop for an AI-run system: the military’s Law of War Manual requires verifying each target against categories (military personnel vs. protected civilians), but an AI system might generate its own judgment that all of them are valid. The risk is that AI-driven decision compression effectively sidelines careful human judgment on law and ethics.

Silicon Valley vs. Pentagon: “Any Lawful Use” Tensions

This crisis has underscored a sharp conflict between AI firms and the Defense Department. Tech companies like Anthropic and OpenAI initially agreed only that their models could be used in “any lawful manner.” But in practice, “lawful” is interpreted broadly by the military. Anthropic’s CEO has publicly insisted his company will not supply technology for fully autonomous weapons or human rights violations. This stance led the DoD to label Anthropic a “supply chain risk” (effectively banning Claude from classified networks) in early March. Anthropic vowed to fight this designation, claiming it still supports warfighters with non-lethal uses (planning, intelligence analysis) and will not ship technology that kills on its own authority.

OpenAI took a different path: facing employee backlash, it published contract amendments ruling out “domestic surveillance” and “unconstrained monitoring”. But privacy advocates point out that these additions are full of “weasel words”. The U.S. government nevertheless welcomed OpenAI’s cooperation, and reportedly struck a new deal with OpenAI and Palantir to continue using GPT-powered models (variants of ChatGPT) for targeting, albeit with some oversight promises. In practice, sources say even OpenAI’s tools (like its GPT-4-based models) were integrated into the same targeting pipeline when Claude was barred.

The crux of the standoff is whether AI can be used in any context deemed lawful by the Pentagon, or whether companies can carve out ethical exclusions. Pentagon leaders appeared frustrated by Anthropic’s refusal to relax its red lines. Politico reports that OpenAI’s CEO conceded their initial deal was “sloppy” and they rushed to add constitutional references. But as EFF notes, the Pentagon historically interprets “lawful” in a permissive way. Anthropic was reportedly willing to continue supporting existing operations (at nominal cost) even after being banned, showing the conflict is less about commercial profit and more about principle.

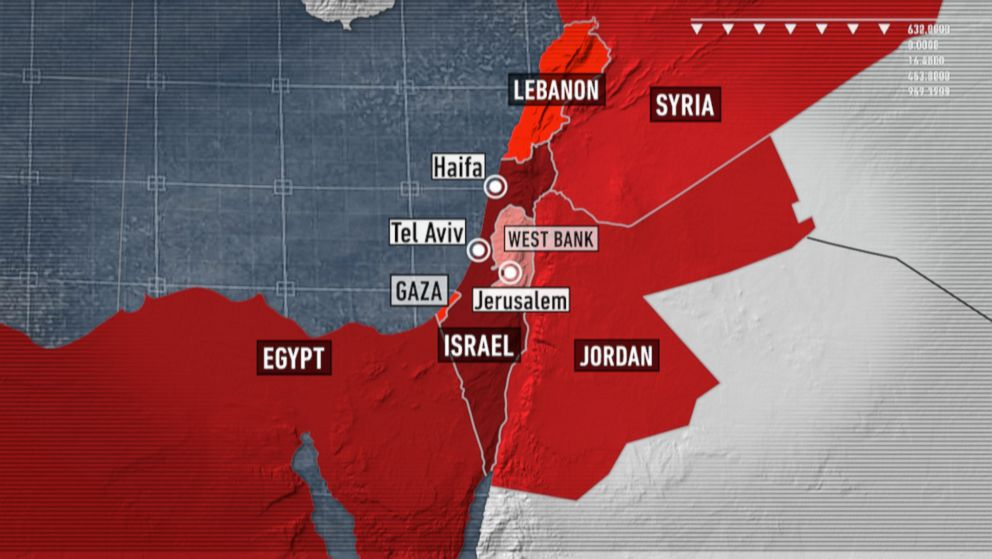

Case Study: The 2026 US–Iran–Israel Conflict

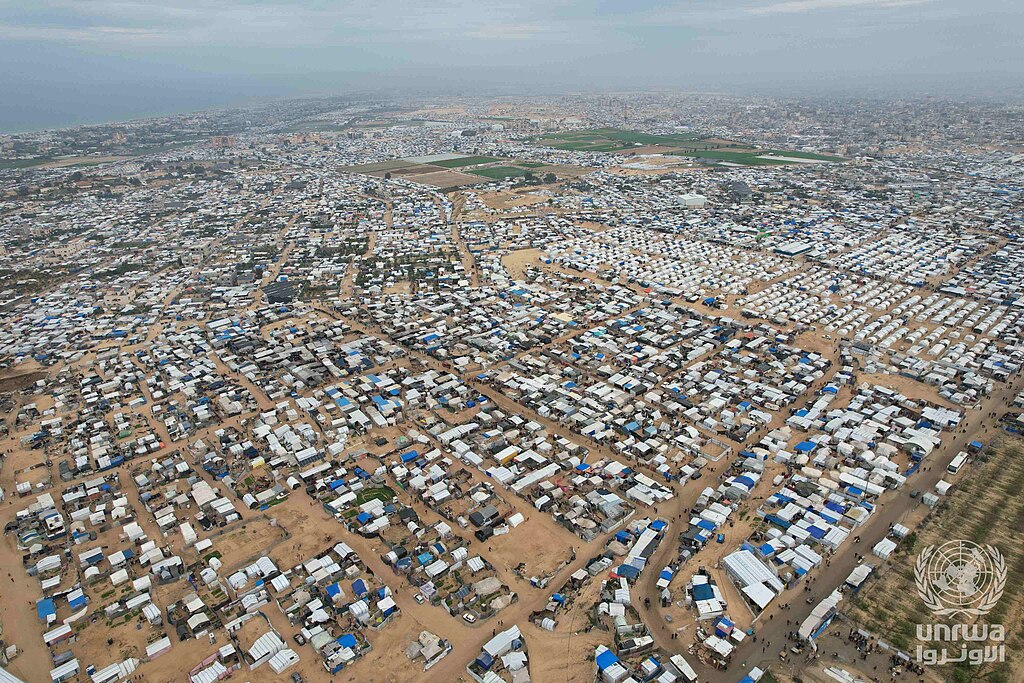

The latest Middle East confrontation provides a vivid case study of AI in combat. In 12 hours starting March 1, U.S. and Israeli forces launched roughly 900 airstrikes across Iran. These strikes hit regime targets including military bases, missile sites, and even Iran’s Supreme Leader. According to press accounts, U.S. sources revealed that hundreds of targets had been pre-processed by AI. One Washington Post snippet (cited by the statecraft article) said that Palantir’s system “proposed hundreds of targets, prioritized them, and provided coordinates”, essentially automating weeks of staff work. After that, human commanders executed many of the AI’s suggested strikes in rapid succession.

Israel too reportedly leaned on similar tech. Israel’s “Lavender” AI system, which was used in Gaza, may have been repurposed to identify Iranian military facilities. Israeli defense officials have publicly credited AI with enabling more strikes than ever. The combined effect was a bombardment of unprecedented scale in a short time, something heralded as the first real test of AI-driven war planning.

However, the conflict also exposed limits and dangers. Some strikes reportedly missed or hit civilian infrastructure, a harbinger of the very civilian-protection challenges experts warn about. Independent analysts note that accelerating strikes narrows the windows for post-strike damage assessment or restraint. The Guardian quoted a source saying that Israel may have “permitted large numbers of civilians to be killed” while trusting the AI system (though verification of this is murky). In any event, post-battle footage showed significant civilian damage in some Iranian cities, triggering debate on the legal and moral responsibilities of rapid-fire AI targeting.

Legal, Ethical and Policy Implications

Human Oversight: International humanitarian law requires human judgment on target validity. The current approach (AI proposes, humans approve en masse) tests this principle. Scholars argue there must be “meaningful human control” over life-and-death decisions. If decision cycles compress to minutes, human review may become a mere formality. Militaries must clarify: will a general truly vet thousands of AI-suggested targets, or just green-light lists? If not, reliance on AI could violate the spirit of the Law of Armed Conflict, risking unlawful strikes.

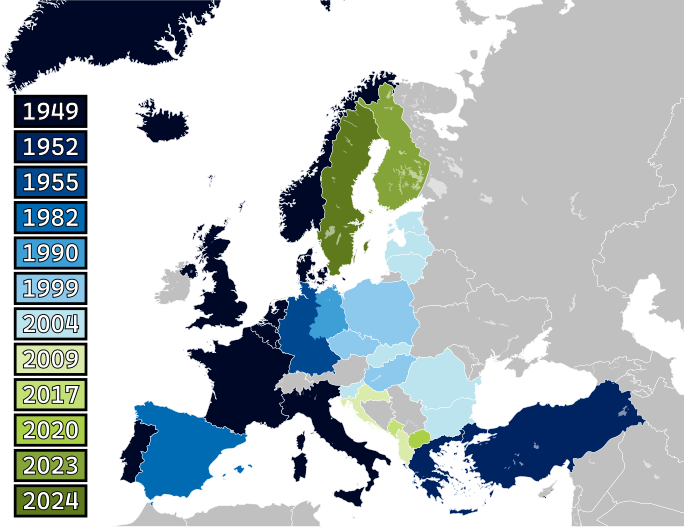

“Any Lawful Use” vs. Red Lines: The Pentagon’s “use any lawful means” stance clashes with tech companies’ ethical red lines. This conflict has spilled into open court (Anthropic is litigating its Pentagon ban) and into internal memos. For public trust, governments may need to codify limits: e.g., requiring explainability or a veto by an independent legal officer for each AI-suggested strike. Alternatively, companies might embed “ethical constraints” in the AI (beyond training), but these can be overridden if the military commands. The resolution likely lies in joint standards: allied governments, tech providers, and international bodies (UN, NATO) should define what checks AI systems must pass before a human can act on their output.

Accountability: When thousands of strikes happen quickly, attribution of mistakes becomes hard. If an AI-generated strike erroneously destroys a hospital, who is responsible? Officially the chain of command remains accountable. But the diffusion of decision-making (tech vendor, algorithm designers, military planners) muddies transparency. Democracies must demand audit trails: each AI-proposed target should record why it was flagged (sources, criteria) so a human can understand or contest it. Such record-keeping is currently ad hoc or non-existent.
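To make the audit-trail idea tangible, here is a minimal sketch of what such a record could look like as an oversight tool. Everything in it is a hypothetical illustration: the names `AuditRecord`, `AuditLog`, `GENESIS`, and `DECISIONS`, the field layout, and the hash-chaining scheme are assumptions chosen for clarity, not a description of any fielded system. The sketch refuses to log an entry that lacks sources, criteria, a named reviewer, or a written rationale, and it links entries by hash so later edits are detectable.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry
DECISIONS = {"approved", "rejected", "escalated"}


@dataclass(frozen=True)
class AuditRecord:
    """Why one AI recommendation was flagged, and what a human decided."""
    recommendation_id: str
    sources: tuple    # inputs the system relied on
    criteria: tuple   # rules or thresholds that triggered the flag
    reviewer: str     # the accountable human
    decision: str     # one of DECISIONS
    rationale: str    # the reviewer's written reasoning
    timestamp: str
    prev_hash: str    # digest of the preceding entry

    def digest(self) -> str:
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


class AuditLog:
    def __init__(self) -> None:
        self._entries = []

    def append(self, recommendation_id, sources, criteria,
               reviewer, decision, rationale) -> AuditRecord:
        if decision not in DECISIONS:
            raise ValueError(f"unknown decision: {decision!r}")
        if not sources or not criteria:
            raise ValueError("record must state sources and criteria")
        if not reviewer.strip() or not rationale.strip():
            raise ValueError("record needs a named reviewer and a rationale")
        prev_hash = self._entries[-1].digest() if self._entries else GENESIS
        record = AuditRecord(
            recommendation_id=recommendation_id,
            sources=tuple(sources),
            criteria=tuple(criteria),
            reviewer=reviewer,
            decision=decision,
            rationale=rationale,
            timestamp=datetime.now(timezone.utc).isoformat(),
            prev_hash=prev_hash,
        )
        self._entries.append(record)
        return record

    def verify(self) -> bool:
        """Check the hash links; editing any entry that has a successor breaks them."""
        expected = GENESIS
        for entry in self._entries:
            if entry.prev_hash != expected:
                return False
            expected = entry.digest()
        return True


log = AuditLog()
log.append("rec-001", ["source-A"], ["criterion-X"], "reviewer-1",
           "escalated", "Second independent source needed before any decision.")
print(log.verify())  # True
```

The design choice worth noting is that the rationale is mandatory: a reviewer cannot record a decision without writing down why, which is one way to make rubber-stamping visible after the fact.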

The Future of Warfare: The March events likely mark a turning point. Analysts caution that this era will not rewind; every rival power sees the speed advantage. Non-Western militaries (China, Russia) are already racing to develop military AI. If left unchecked, wars might become contests of algorithmic supremacy rather than sheer munitions, raising the stakes of an AI arms race. There is an urgent need for arms-control-style frameworks for lethal AI: discussions on banning or limiting autonomous weapon systems stalled years ago, but the reality of decision compression suggests they must be revived.